South Side Science Fair

Checkers at MSI

Duckietown at MSI

McDade students visit TTIC

Summer 2022 visiting students

Chip explains how Baxter solves a Rubik's Cube

Baxter solves a Rubik's Cube at MSI

Duckietown Autolab at TTIC

Robot Block Party at MSI

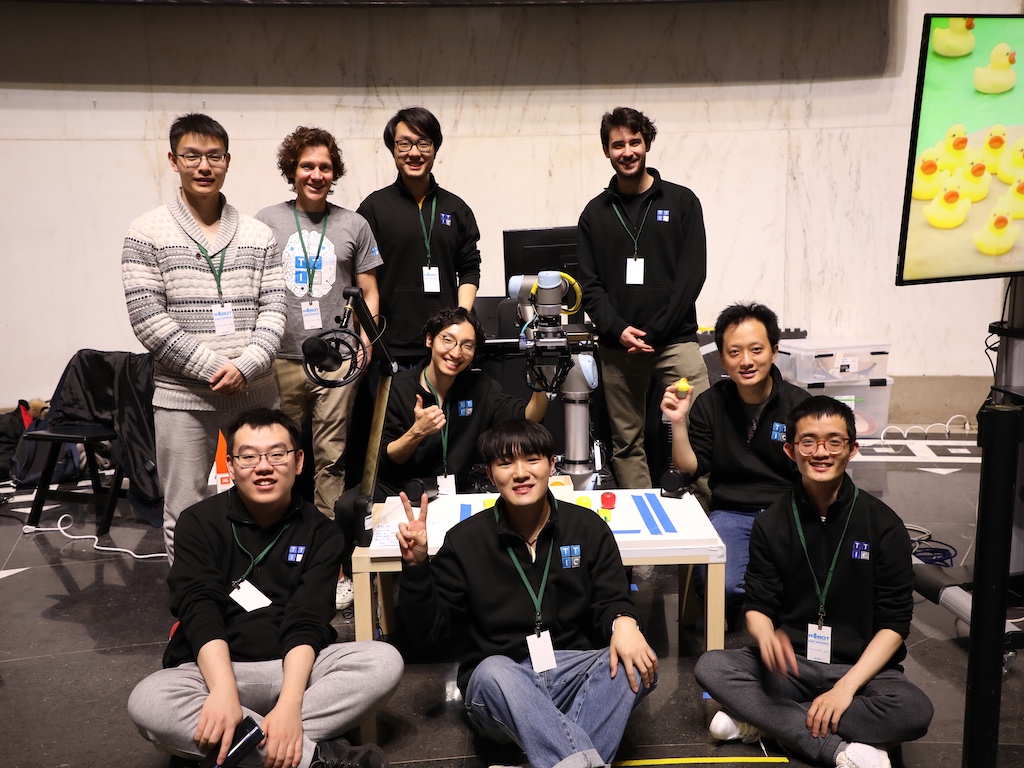

Building Duckiebots (incidentally, the three people here each now work at different self-driving companies)

Checkers at UCLS

The Robot Intelligence through Perception Lab (RIPL) at TTI-Chicago develops intelligent, perceptually aware robots that are able to work effectively with and alongside people in unstructured environments.

RIPL is directed by Professor Matthew R. Walter. Our research focuses on advanced perception algorithms that endow robots with a rich awareness of their surroundings and the ability to interact safely and naturally with humans. We are particularly interested in algorithms that take as input multi-modal observations of a robot’s surroundings (e.g., laser range data, image streams, and speech) and infer properties of the objects, places, people, and events that make up the robot’s environment.

We are looking for talented PhD students who are excited about computer vision, natural language understanding, and machine learning for robotics. If you are interested in joining us, consider applying to TTI-Chicago.

We are also looking to host students who are enthusiastic about robotics as part of TTI-Chicago’s Visiting Student Program, and encourage you to apply.

News

| Date | News |
|---|---|
| September 2025 | Jiading Fang received his diploma and is off to Waymo. Congrats! |
| June 2025 | Congrats to Teddy Ayalew for his paper on learning task-agnostic reward functions from human videos and to Shengjie Lin for his paper on learning high-fidelity 3D models of articulated objects, both accepted to ICCV 2025! |
| May 2025 | We are hosting the 2025 Midwest Robotics Workshop, which brings together nearly 200 roboticists from the Midwest! |
| April 2025 | Our lab is excited to participate in the Robot Block Party event at the Museum of Science and Industry. |
| December 2024 | Congratulations to Jiading Fang and Shengjie Lin for having their paper on scaling NeRFs to large environments via fusion accepted at ISRR 2024. |

Older news is available here.